AI brain fry: When the tools start running your day

AI was supposed to make your life easier. It promised abundance. It promised leisure.

What many workers are finding instead is a new strain of exhaustion that people are calling “brain fry.”

Early adopters in tech first sounded the alarm, but the strain is spreading to roles across industries where AI is woven into daily workflows.

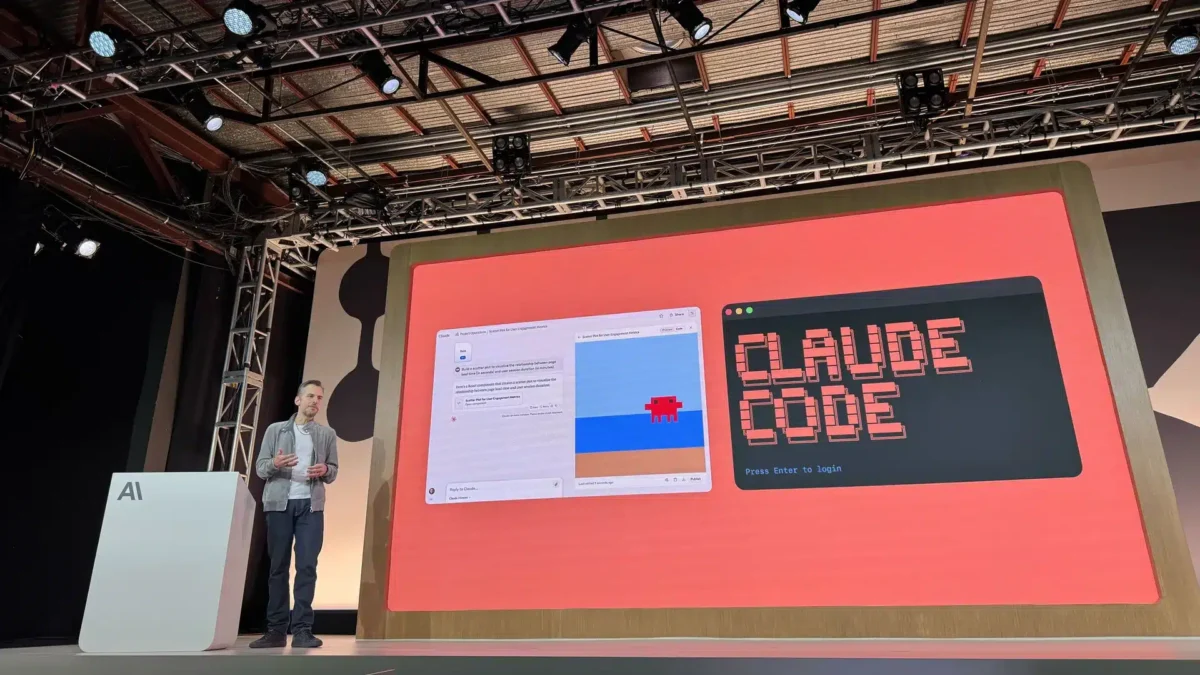

Hard-core adopters complain about too many lines of code to analyze, armies of AI assistants to wrangle, and lengthy prompts that take as much thought as the original task did.

Consultants at Boston Consulting Group have labelled this mental overload “AI brain fry,” describing it as a state of cognitive exhaustion from supervising AI tools pushed past human limits.

The rise of autonomous AI agents changes the work dynamic: instead of doing tasks yourself, you now manage fast digital workers that need direction and correction.

“You have to really babysit these models,” said Ben Wigler, co-founder of the start-up LoveMind AI.

That supervision is not light work. People building legions of agents spend hours keeping them on track, which creates a steady, draining oversight burden rather than freeing up time.

“That’s what’s causing the burnout,” Norton wrote in an X post.

For some, the situation is worst in software development, where AI has rapidly improved at producing code but has not eliminated the need for careful human review.

“The cruel irony is that AI-generated code requires more careful review than human-written code,” software engineer Siddhant Khare wrote in a blog post.

Code produced by models can introduce subtle security flaws, hidden logic errors, or design choices no one fully understands, so the apparent speed gains can come with bigger downstream costs.

“It is very scary to commit to hundreds of lines of AI-written code because there is a risk of security flaws or simply not understanding the entire codebase,” added Adam Mackintosh, a programmer for a Canadian company.

If AI agents stray from the intended goal, they can chew through compute and budget chasing the wrong path, which means oversight must be constant and precise.

That constant vigilance bleeds into personal time. Teams chasing productivity gains can end up working longer hours because the tools make it easy to push further into the night.

“There is a unique kind of reward hacking that can go on when you have productivity at the scale that encourages even later hours,” Wigler said.

Some workers describe marathon sessions troubleshooting large swaths of machine-assisted work: one engineer spent 15 consecutive hours fine-tuning about 25,000 lines of code and hit a hard limit.

“At the end, I felt like I couldn’t code anymore,” he recalled.

“I could tell my dopamine was shot because I was irritable and didn’t want to answer basic questions about my day.”

Others report similar trouble switching off: a musician and teacher who asked to remain anonymous said evenings meant experimenting with AI instead of resting.

Still, many of those interviewed retain a positive view of AI’s potential, even while warning about the human cost of constant supervision and tinkering.

BCG’s survey of 1,488 professionals in the United States actually found a decline in burnout rates when AI handled repetitive tasks, suggesting benefits exist alongside the new risks.

To manage those risks, the study recommends leaders set clear boundaries on how employees use and oversee AI and define where human judgment must remain central.

“That self-care piece is not really an American workplace value,” Wigler said.

Given the tension between productivity tools and human limits, organizations will need to design workflows that protect attention rather than just chase efficiency.